The Alignment Files—Part 3: Intellectual Hitchhiking

Why AI-Accelerated Discovery Might Just Get You to the Wrong Destination Faster

This is Part 3 of The Alignment Files.

In Part 1, we named the three philosophical tribes at war for the product manager’s soul. In Part 2, we examined how the Factory’s gravity well turns excellent execution into disconnected performance theater.

Today, we confront a newer and more insidious problem: the promise that AI can close the Market Grounding gap without anyone having to leave their desk!

The Temptation

In the Pragmatic Framework, we talk about the NIHITO rule: Nothing Important Happens Inside The Office. The Market Grounding tribe lives by it.

Get outside. Talk to real clinicians. Sit in the workflow. Watch the workarounds. Validate the problem before the factory is activated.

But let’s get real with each other — NIHITO is HARD.

It is time-consuming to recruit clinicians for interviews. It is expensive to travel to sites. It is exhausting to conduct twenty conversations to find the one persistent insight that reframes the entire problem. And in a health system where the factory is hungry and the next PI cycle waits for no one, the pressure to skip the fieldwork and just get something into the backlog is relentless.

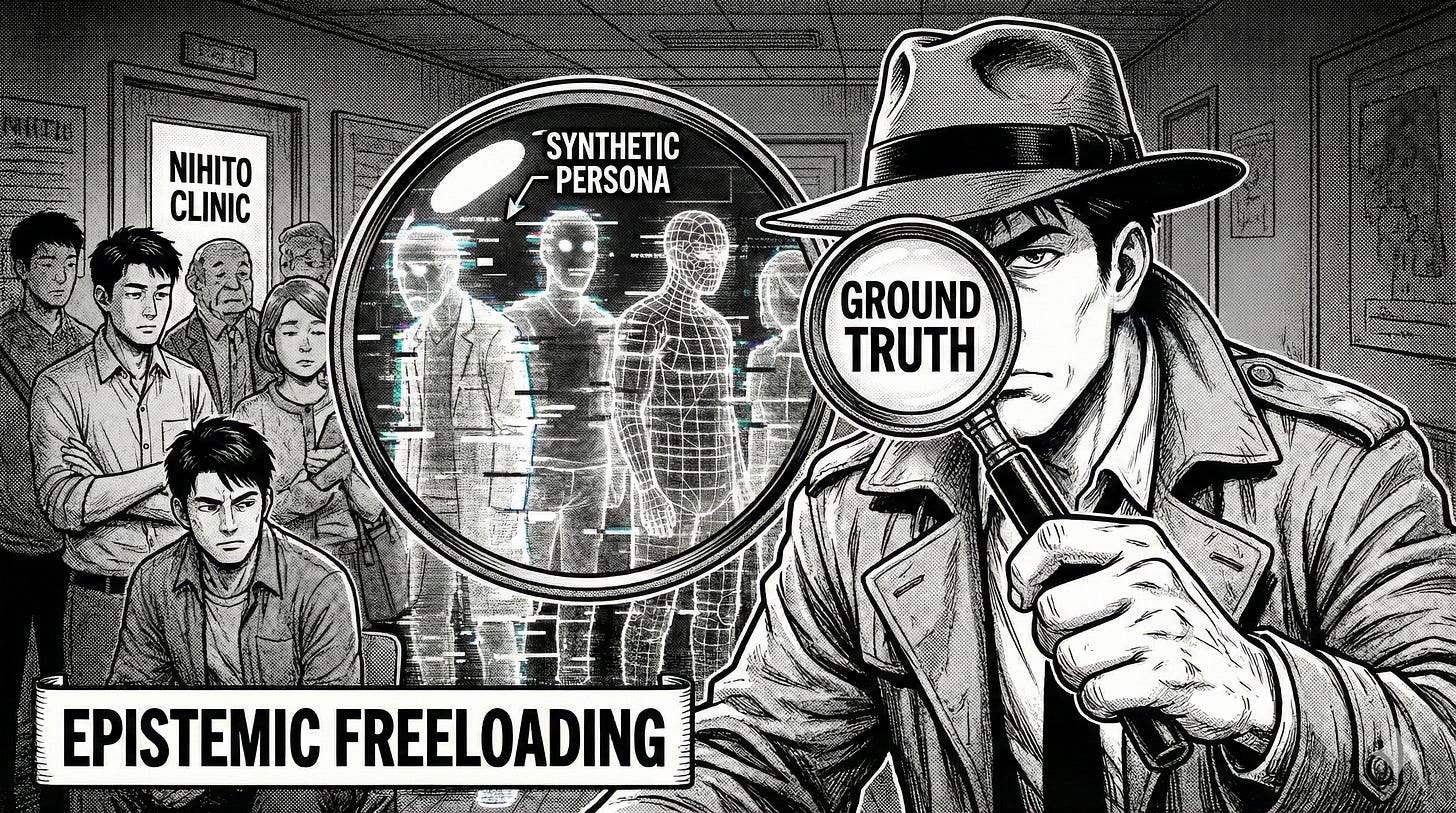

So along comes the Synthetic Persona. A few prompts, and AI generates a “Digital Twin” of your ‘customer’ that answers questions instantly, never pushes back on your scheduling, never cancels at the last minute, and costs nothing.

It feels like a breakthrough.

It is not a breakthrough. It is Epistemic Freeloading — intellectual hitchhiking. And it is quietly becoming the most dangerous shortcut in modern product management.


What Is Epistemic Freeloading?

Simply put, it is wanting the authority of evidence without the labor of collecting it.

When a Product Manager uses AI to simulate a customer instead of actually talking to one, they are borrowing from the statistical average of the internet’s past record to predict their product’s future. They get something that “looks like” research. It has themes. It has quotes. It has a nice summary document.

It just doesn’t have ground truth.

This is not the same as using AI to synthesize what you have already learned from real people. That is acceleration, and it is genuinely powerful.

But simulation is not synthesis. Simulation is substitution.

And the difference, while subtle in the workflow, is catastrophic in the outcome.

Here be Dragons!

Three invisible dangers compound over time:

Synthetic Stagnation. LLMs are trained on historical data. They know what happened yesterday; they cannot invent tomorrow. Testing your ideas exclusively against AI-generated personas will only ever get you “average” results: the statistical center of what has already been written, said, and thought. You lose the capacity for genuine discovery.

In healthcare, the next breakthrough often comes from an outlier observation in a clinical workflow nobody thought to study. A synthetic persona will never take you there. It will confidently take you to the middle of the road. Every. Single. Time.

Heterogeneity Collapse. Real humans are messy, irrational, and wonderfully contradictory. A night-shift ICU nurse and a Monday-morning outpatient clinic nurse share the same role title yet inhabit entirely different clinical realities. AI personas are logical and flat. They represent the “statistically average” clinician, a person who does not actually exist. Build for that synthetic average, and you miss the outliers.

In healthcare product design, outliers are often where the most critical unmet needs reside.

The Echo Chamber. If the factory is building based on what an AI persona “said,” and that persona was constructed from the assumptions of the person who prompted it, congratulations — you have created a closed loop. You are asking an algorithm what it thinks about a product designed by an algorithm, validated by an algorithm.

The market — the actual, inconvenient, grumpy, contradictory market — is nowhere in the circuit.

Why AI Interviews Aren’t Interviews

There is a deeper methodological problem here that product teams don’t often consider.

In behavioral science, the Observer Effect describes how participants alter their behavior when they know they are being studied. In AI-driven research, this takes on a new and problematic dimension. Human users interacting with AI moderators are prone to “social desirability bias”: they give the responses they think are expected rather than describing what they actually experience.

The result is what I’ll call Synthetic Emotion: affective expressions performed for the machine rather than genuinely felt. If your discovery process captures these adapted responses and treats them as ground truth, you are not studying your users. You are studying their performance of being studied.

In healthcare, this matters enormously. Trust is the currency of adoption.

A clinician will tell an AI chatbot that a new documentation workflow “seems fine.” That same clinician, in a face-to-face conversation — after the third coffee, when the formal interview is over, and the real talking starts — will tell you that the workflow adds 7 clicks to every patient encounter. And that they’ve already built a workaround on a Post-it note stuck to their monitor.

NIHITO is not just a research methodology. It is a trust-building exercise. The insights that matter most are the ones people only share when they believe you genuinely care about the answer.

The AI doesn’t ever see the Post-it on the monitor. It never will.

“But It’s Faster!”

The counterargument is always speed. A L W A Y S.

“We can generate insights in hours instead of weeks. We can test ten personas before lunch. We can move faster.”

And this is true.

AI-accelerated discovery is faster. The question nobody asks is: faster toward what?

Speed without ground truth does not close the gap between the factory and the market. It closes the gap between the factory and a simulation of the market. The factory builds faster. The product ships faster.

And that Zombie Product — live, yet not alive — arrives faster too.

Think about it through the lens of what we discussed in Part 2:

If the gravity well of the factory is the problem of building the wrong things efficiently…

…then Epistemic Freeloading is the problem of validating the wrong things efficiently.

It is the Build Trap with an added veneer of research.

The roadmap looks evidence-based… but the evidence is synthetic. And the organization has no way to tell the difference until the product fails downstream: quietly, expensively, and with no one accountable for the gap.

In the language of Part 1’s tribes, Epistemic Freeloading is what happens when the Market Grounding tribe surrenders its methodology to the factory’s demand for speed.

The tribe still exists. It still has a seat at the table. But it has stopped doing the work that gave it authority.

It has become a hitchhiker, thumbing a ride on the factory’s momentum, borrowing the language of evidence without bearing the cost of collection.

So What Should AI Actually Do in Discovery?

None of this is an argument against AI in the discovery process. I use these tools daily. They are genuinely transformative…when pointed at the right problem.

The distinction is simple: AI is brilliant at synthesis. As a substitute, it is a hot mess.

The Workflow:

Stage 1 — Raw Collection (NIHITO. No shortcut.): Conduct real human interviews. In healthcare, 12–15 deep conversations with clinicians, administrators, and operational staff will surface the messy, emotional, contradictory reality that no model can simulate. This is the non-negotiable foundation.

HOT TIP: Ambient Scribing is one of the fastest-adopted technologies in healthcare. USE IT!!!

After securing permission to record, I use a Plaud personal recording device to capture interviews. Within 10 minutes of finishing, I have a complete transcript of everything that was discussed, AND an AI-generated, targeted summary that analyzes the conversation against a specific product field interview template.

Stage 2 — AI-Powered Synthesis: Use AI to transcribe, cluster, and identify thematic patterns across those real conversations. This is where the speed advantage is legitimate and enormous. What used to take a research team two weeks can now be done in an afternoon.

Stage 3 — Dialogic Interrogation: Engage with the AI about the data. Ask it to surface contradictions. Flag outlier responses. Identify gaps in the interview set. The AI becomes a thinking partner, not a thinking substitute.

Stage 4 — Calibration: Validate the AI’s thematic conclusions against a subset of real human responses. If the machine’s patterns do not match the ground truth, you have caught the signal problem before the factory is activated.

AI is the accelerant. Humans are the fuel. Light the accelerant without the fuel and you don’t get a controlled burn. You get a flash and nothing left.

The Uncomfortable Truth

The real reason Epistemic Freeloading is spreading is not that Product Managers are lazy. Most of them are anything but.

It is that the organizational structure rewards the shortcut.

The factory demands a populated backlog. The PI cycle demands committed objectives. The executive team demands a roadmap by Thursday. In this environment, a Product Manager who says, “I need six weeks and access to fifteen clinicians before I can validate this,” is not supported or rewarded for rigor.

They are penalized for the delay.

And so the shortcut becomes the norm. AI personas replace field research. Synthetic validation replaces NIHITO. The backlog fills. The factory runs. And nobody asks whether the evidence underneath the roadmap is real — until the Zombie Products start piling up.

The fix is not to ban AI from discovery. The fix is to stop treating the factory’s appetite as the discovery team’s deadline.

BUT…that requires a mechanism. A way to distinguish between validated evidence and expensive guessing. A way to put a “truth score” on the roadmap so that leadership can see, at a glance, which initiatives have earned their place through evidence and which are riding on assumption and enthusiasm.

That mechanism is the subject of Part 4.

In Part 4: The Truth Score, we introduce the Validity Buffer — a practical tool for surviving the HIPPO (the HIghest Paid Person’s Opinion) and ensuring your roadmap is grounded in evidence, not enthusiasm.

Stuart Miller is Managing Director of Haverin Consulting, a healthcare IT strategy consultancy. This is Part 3 of The Alignment Files. Subscribe for Parts 4–5.